Artificial Intelligence, or AI, is the process of building computers or other machines that perform tasks that usually require human intelligence. AI technology uses methods such as machine learning, natural language processing, computer vision, robotics, and neural networks. Using data, computing power, and algorithms, AI systems process information, make decisions, and produce results.

AI is important because it simplifies chores, helps people make decisions, increases speed and productivity, personalizes experiences, transforms healthcare, and makes people safer. AI systems have benefits such as speed and accuracy, continuous operation, managing complexity, automating repetitive tasks, and reducing risk.

Artificial intelligence has drawbacks as well as benefits. The drawbacks include the loss of jobs, the lack of human instincts and values, ethical and legal issues, reliance on data quality, and security risks.

Artificial intelligence is changing quickly and has many applications across industries and fields. Its development requires careful analysis of the opportunities and challenges it presents.

What Is Artificial Intelligence (AI)?

Artificial Intelligence (AI) refers to the design and development of computer systems or devices that carry out tasks that normally require human intelligence. It involves machines emulating human cognitive processes, allowing them to learn from experience, adapt to new inputs, and carry out tasks independently.

Artificial Intelligence comprises many techniques and strategies that allow machines to simulate human intelligence. These methods are machine learning, natural language processing, computer vision, robotics, expert systems, and neural networks. AI systems perceive and comprehend their surroundings, reason and make decisions, and act accordingly.

The term “Artificial Intelligence” was coined in 1956 at the Dartmouth Conference, where scientists and researchers discussed the possibility of constructing intelligent devices. AI has become a significant area of study and advancement since then.

AI is the creation of computer systems or machines with intelligence similar to that of humans, allowing them to perform tasks, learn, adapt, and make decisions. It consists of numerous techniques and has a long history of research and development aimed at giving machines these capabilities.

How Does Artificial Intelligence Work?

Artificial Intelligence (AI) works through a series of steps that include data input, processing, learning, and decision-making. The details depend on the type of AI system and what it needs to do.

AI systems depend heavily on data, a key input that helps them learn, make predictions, and make choices. The data comes from a wide range of sources, such as databases, records, different kinds of devices, and the Internet. These sources provide the large pool of information that AI systems need to work well.

Data needs to be preprocessed before being fed into an AI system. The data is cleaned, missing values are handled, values are normalized or scaled, and the result is converted into a format suitable for further processing.
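The preprocessing steps described above can be sketched in a few lines of Python. This is a minimal illustration, not a full pipeline: the `ages` column is made-up sample data, and the helper names `fill_missing` and `min_max_scale` are hypothetical. It assumes missing values are marked as `None`, fills them with the mean of the observed values, and then rescales everything into the range 0 to 1.

```python
from statistics import mean


def fill_missing(values):
    """Replace None entries with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]


def min_max_scale(values):
    """Rescale values linearly into the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant column carries no spread; map it to zeros.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


ages = [20, None, 40, 60]       # made-up sample column with one missing value
cleaned = fill_missing(ages)    # the missing entry becomes 40, the mean of 20, 40, 60
scaled = min_max_scale(cleaned)
print(cleaned, scaled)
```

Mean imputation and min-max scaling are only one possible choice; other strategies (median imputation, standardization) follow the same pattern.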

Raw data often contains information that isn’t needed or isn’t relevant. Feature extraction is the process of selecting or deriving important features from the data to build a good representation of the problem. The goal is to reduce the number of dimensions and make the downstream AI algorithm work better.
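One very simple way to reduce the number of dimensions is to drop features that never change, since they cannot help distinguish one example from another. The sketch below assumes that approach (variance-based feature selection); the data, the column names, and the helper `select_features` are hypothetical and only meant to show the idea.

```python
from statistics import pvariance


def select_features(rows, names, min_variance=0.0):
    """Keep only the columns whose variance is greater than min_variance."""
    columns = list(zip(*rows))  # transpose rows into columns
    keep = [i for i, col in enumerate(columns) if pvariance(col) > min_variance]
    kept_names = [names[i] for i in keep]
    reduced = [[row[i] for i in keep] for row in rows]
    return kept_names, reduced


# Made-up data: the middle column is constant and carries no information.
rows = [
    [1.0, 5.0, 0.0],
    [2.0, 5.0, 1.0],
    [3.0, 5.0, 0.0],
]
names = ["height", "constant", "flag"]

kept_names, reduced = select_features(rows, names)
print(kept_names)  # the constant column is dropped
```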

AI systems use different algorithms and models depending on the task. Machine learning methods such as linear regression, decision trees, and support vector machines, as well as deep learning models such as convolutional neural networks and recurrent neural networks, are used. These algorithms and models learn patterns, relationships, and rules by being trained on labeled or unlabeled data.

In supervised learning, the AI system is trained on labeled data, where the input data is paired with the desired output. The system uses these examples to learn how to predict or classify data it hasn’t seen before. In unsupervised learning, the AI system finds patterns or structures in data without being told what they are. Reinforcement learning teaches an AI system how to interact with its environment through trial and error to maximize a reward signal.

Testing shows how well the trained AI model works. Depending on the task, accuracy, precision, recall, or F1 score is used to measure performance. If the model doesn’t perform as well as wanted, the hyperparameters are tuned, the architecture is changed, or the amount of training data is increased.
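To make the evaluation metrics concrete, the sketch below computes precision, recall, and F1 score for a binary classifier from scratch. The labels in `y_true` and `y_pred` are made-up values, and the function name `precision_recall_f1` is hypothetical; in practice a library implementation would normally be used instead.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)  # true positives
    fp = sum(1 for t, p in pairs if t != positive and p == positive)  # false positives
    fn = sum(1 for t, p in pairs if t == positive and p != positive)  # false negatives

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Made-up ground truth and predictions for eight examples.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 0, 1, 0]

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(precision, recall, f1)
```

Here there are three true positives, one false positive, and one false negative, so precision and recall are both 3/4.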

Once trained and tuned, the AI system uses new data to make predictions, classifications, or decisions. The system takes the given data, processes it using the model it has learned, and returns a result or takes an action based on its programming.

Iterative learning is a way that AI systems keep learning and getting better over time. They get new information to help them improve their models and adapt to changing situations. Iterative learning lets AI systems get smarter, more accurate, and better at what they do over time.

The AI system is a computer-based system that uses AI technology to perform specific jobs. It includes the methods, data, and computing systems needed to handle information, make choices, and give results based on AI concepts.

Data gathering, preparation, feature extraction, algorithm selection and training, model evaluation, decision-making, and iterative learning are all parts of the AI process flow. AI systems use iterative processes to learn from data and improve at specific tasks.

What Are the Main Branches or Types of AI?

There are seven main types of Artificial Intelligence, including Computer Vision, Fuzzy Logic, Robotics, Machine Learning, Expert Systems, Neural Networks/Deep Learning, and Natural Language Processing.

The Computer Vision branch of AI allows computers to interpret and understand visual information from the real world, replicating human vision capabilities. It’s crucial in facial recognition systems, autonomous vehicles, and medical imaging applications, where insights are derived from visual data.

Fuzzy logic helps handle uncertain or imprecise information. It’s vital in areas such as control systems, decision-making tools, and weather forecasting, where it helps in managing ambiguity and enhancing system robustness.

AI-driven robotics are paramount in industries such as manufacturing, healthcare, and space exploration. They allow precision, endurance, and speed beyond human capability, carrying out repetitive tasks, surgical operations, or exploring unreachable terrains.

Machine learning algorithms learn from data and make data-based decisions, improving over time. Machine learning is essential in various sectors, including finance for fraud detection, retail for customer segmentation, and healthcare for predictive diagnostics.

Expert systems simulate the decision-making ability of a human expert. They’re used in various fields, such as medical diagnosis, stock trading, and weather prediction, providing valuable insights and predictions that support decision-making.

Deep Learning/Neural Networks is a subtype of machine learning based on artificial neural networks that excel in pattern recognition and processing massive amounts of data. It is essential in speech recognition, recommendation systems, and natural language processing.

Natural Language Processing (NLP) is a basic component of AI that allows computers to comprehend and react to human language. It powers applications such as voice-assisted systems, translation services, and sentiment analysis tools.

The branches of AI technology play a crucial role in augmenting operational efficiency, making informed decisions, enhancing the customer experience, and creating new avenues for innovation and development in various industries.

1. Computer Vision

Computer vision is a type of artificial intelligence (AI) that makes it possible for machines to interpret, analyze, and understand visual information from images or videos.

Computer Vision programs use image processing, pattern recognition, and machine learning to extract features, detect objects, identify trends, and make sense of visual data.

AI improves Computer Vision by letting computers learn from large data sets, get better at detecting and recognizing objects, and make smart decisions and interpretations based on what they see.

AI improves computer vision by streamlining tasks such as visual analysis, letting objects be detected and tracked in real time, making it easier to understand images and videos, and enabling applications such as self-driving cars, monitoring systems, and medical imaging.

2. Fuzzy Logic

Fuzzy logic is a mathematical approach to making decisions when there is a lot of uncertainty and imprecision. It allows statements to be partially true or partially false.

Fuzzy Logic uses linguistic variables, membership functions, and fuzzy rules to describe and express uncertainty and imprecision. It reasons based on degrees of truth instead of just yes or no.
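The idea of degrees of truth can be illustrated with a small fan controller. This is a hedged sketch under several assumptions: the fuzzy sets "warm" and "hot", their temperature ranges, the two rules, and the output speeds are all invented for the example, and the final step uses a simple weighted average of rule outputs rather than any particular standard defuzzification method.

```python
def triangular(x, left, peak, right):
    """Triangular membership function: 0 outside (left, right), 1 at peak."""
    if x <= left or x >= right:
        return 0.0
    if x <= peak:
        return (x - left) / (peak - left)
    return (right - x) / (right - peak)


def fan_speed(temp_c):
    """Blend two fuzzy rules into a single fan speed (percent)."""
    warm = triangular(temp_c, 10, 20, 30)  # degree to which temp is "warm"
    hot = triangular(temp_c, 20, 30, 40)   # degree to which temp is "hot"

    # Hypothetical rules: IF warm THEN speed 40; IF hot THEN speed 90.
    total = warm + hot
    if total == 0:
        return 0.0  # no rule applies
    return (warm * 40 + hot * 90) / total


print(fan_speed(20))  # fully warm, not hot
print(fan_speed(25))  # half warm, half hot
```

At 25 degrees the temperature is 0.5 "warm" and 0.5 "hot", so the output lands halfway between the two rule speeds.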

AI combines Fuzzy Logic with machine learning methods to build intelligent systems that deal with unclear and imprecise data, make decisions based on fuzzy rules, and change their behavior based on input.

AI improves fuzzy logic by letting computers learn fuzzy rules from data, deal with situations that are complicated and unclear, and make smart choices in real time. It makes it easier and more accurate to make decisions when there is doubt.

3. Robotics

Robotics is the study of how to design, build, and operate machines or robots that do jobs on their own or with some help.

Robotics uses sensors, motors, and software programs to make robots that sense their surroundings, make choices, and do physical jobs.

Robotics is made better by AI because it gives robots cognitive skills such as perception, learning, planning, and decision-making. AI lets robots adapt to changing surroundings, learn from their mistakes, and communicate with people and computers.

AI helps robotics by making it possible for robots to do things such as handle difficult tasks, manage routine tasks, improve accuracy and precision, make things safer, and work well with people in many fields, such as industry, healthcare, and research.

4. Machine Learning

Machine learning is a branch of artificial intelligence focusing on models and algorithms that let computers learn from data and predict or decide on things without being explicitly programmed.

Data is processed and analyzed by machine learning algorithms, which then look for patterns and use those patterns to predict or categorize data. The algorithms train their internal parameters to perform better.
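As a small example of an algorithm fitting its internal parameters to data, the sketch below fits a straight line with ordinary least squares. The two parameters are the slope and the intercept. The data points and the function name `fit_line` are assumptions made for illustration; the points were chosen to lie exactly on y = 2x + 1 so the result is easy to check.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Made-up training data that lies on the line y = 2x + 1.
xs = [1, 2, 3, 4]
ys = [3, 5, 7, 9]

slope, intercept = fit_line(xs, ys)
prediction = slope * 5 + intercept  # predict for an unseen input x = 5
print(slope, intercept, prediction)
```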

Machine learning techniques are used in AI to enhance learning capabilities, automate decision-making, and allow systems to adapt and get better over time.

AI enhances machine learning by allowing richer and more complicated learning architectures, effectively managing massive datasets, automating feature extraction, and integrating with other AI techniques for improved performance in some applications.

5. Expert Systems

Expert systems are artificial intelligence (AI) systems that mimic human expertise and knowledge in certain areas to come up with smart answers or suggestions.

Expert systems comprise a knowledge base of expert knowledge and a set of rules or an inference engine that uses that knowledge to reason and make choices.
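The split between stored knowledge and a reasoning engine can be sketched with forward chaining, one common way rule-based systems derive new facts. The rules and fact names below are invented for illustration and are not medical advice; `forward_chain` is a hypothetical helper, not part of any library.

```python
def forward_chain(facts, rules):
    """Apply IF-THEN rules repeatedly until no new facts can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # Fire the rule if all its conditions are known facts.
            if conclusion not in known and conditions <= known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical knowledge base: (set of conditions, conclusion).
RULES = [
    ({"fever", "cough"}, "possible_flu"),
    ({"possible_flu", "fatigue"}, "recommend_rest"),
]

derived = forward_chain({"fever", "cough", "fatigue"}, RULES)
print(sorted(derived))
```

The second rule only fires because the first one has already added `possible_flu`, which shows how conclusions chain together.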

AI improves expert systems by adding machine learning methods that use data to learn and make better choices or suggestions. AI lets other AI methods, such as natural language processing and computer vision, be added to Expert Systems to make them more useful.

AI improves expert systems by letting them handle large and complex knowledge bases, learn from new data or experiences, adapt to changing conditions, and provide more accurate and personalized solutions or advice in healthcare, banking, and customer service.

6. Neural Networks/Deep Learning

Neural Networks, or Artificial Neural Networks, are computer models based on how the human brain is built and works. Deep Learning is a subset of Neural Networks that involves training models with many layers to learn complex patterns and representations.

Neural networks are made up of nodes, or artificial neurons, that are linked to each other and work together to process and pass on information. Deep Learning models learn hierarchical data representations by training and adjusting the weights of the connections between neurons.

AI uses neural networks and Deep Learning with other AI methods, such as large-scale data processing, optimization algorithms, and parallel computing, to train and apply models that perform tasks such as image and speech recognition, natural language processing, and decision-making.

AI improves neural networks and Deep Learning by allowing for bigger and more complicated models, faster training and inference processes, better accuracy and generalization, and the ability to handle big data and difficult problems in healthcare, banking, and autonomous systems.

7. Natural Language Processing

Natural Language Processing (NLP) is a branch of AI that focuses on allowing machines to understand, interpret, and generate human language.

NLP involves processing and analyzing textual data, extracting meaning, and deriving insights from language patterns and structures. It encompasses text classification, sentiment analysis, machine translation, and question-answering tasks.

AI enhances NLP by combining it with machine learning algorithms, deep learning models, and linguistic rules to improve language understanding, automate language generation, and allow applications such as chatbots, virtual assistants, and language-based analytics.

AI improves natural language processing as it allows machines to understand and generate human language more accurately, handle diverse languages and dialects, interpret context and intent, and provide personalized and intelligent responses in areas such as customer service, information retrieval, and content generation.

AI enhances these branches by providing advanced learning capabilities, automation, adaptation to changing conditions, and improved accuracy, leading to numerous benefits across domains such as healthcare, finance, robotics, and language processing.

What Are the Key Components Required for Building an AI System?

The key components required for building an AI system are data, algorithms, computational resources, infrastructure, training, model deployment, evaluation, and iterative improvement.

Data is the foundation of AI systems. It serves as the input for training models and making predictions or decisions. High-quality, diverse, and representative data is crucial for effective AI system development.

AI algorithms are the mathematical models and techniques that allow the system to learn patterns, make predictions, or make decisions. Different algorithms are used based on the task and the type of AI system being developed, such as machine learning algorithms, optimization algorithms, or rule-based algorithms.

AI systems require computational resources to process data, train models, and perform complex calculations. These include CPUs (Central Processing Units), GPUs (Graphics Processing Units), or specialized hardware such as TPUs (Tensor Processing Units) to accelerate AI computations.

An AI system requires infrastructure to store and manage data, run algorithms, and deploy models. Servers, cloud platforms, storage systems, and networking infrastructure are included.

Training an AI system involves feeding it with labeled or unlabeled data and using algorithms to learn patterns, relationships, or rules. The training process aims to optimize the system’s performance and make it capable of making accurate predictions or decisions.

Once trained, the AI model is deployed in a production environment to make real-time predictions or decisions. Deployment involves integrating the model into the system’s architecture and ensuring scalability, reliability, and efficiency.

Evaluating the performance of an AI system is crucial to ensure its accuracy and effectiveness. Evaluation metrics are defined based on the specific task, and testing is conducted using representative datasets or simulated scenarios.

AI systems often undergo iterative improvement based on feedback and new data. That requires retraining models, fine-tuning parameters, and revising the system to enhance its performance and adaptability.

What Is Machine Learning?

Machine Learning is a branch of Artificial Intelligence (AI) that focuses on building algorithms and models that let computers learn from data and make predictions or decisions without being explicitly programmed to. It’s a data-driven method where systems are trained on data to automatically learn trends, relationships, and rules.

In standard computing, explicit steps are written to solve a specific problem. In machine learning, the system instead learns from examples or data to find the underlying trends and make predictions or decisions about new, unseen data, rather than having the answer coded directly. The three main types of Machine Learning methods are supervised learning, unsupervised learning, and reinforcement learning.

Supervised learning is when the system is trained with labeled data, where the input data is paired with the desired output or target variable. The program uses these examples to learn how to predict or classify data it hasn’t seen before. For example, a supervised learning program learns to tell whether new pictures are of cats or dogs using pictures of cats and dogs that have been labeled.
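A minimal sketch of supervised classification is a nearest-neighbor classifier: it labels a new example with the label of the closest training example. Real image classifiers work on pixels and are far more involved; here each animal is reduced to two made-up numeric features (weight in kg, ear length in cm), and the function name `nearest_neighbor_predict` is hypothetical.

```python
import math


def nearest_neighbor_predict(train, query):
    """Return the label of the training example closest to the query."""
    _, label = min(train, key=lambda pair: math.dist(pair[0], query))
    return label


# Made-up labeled examples: ((weight_kg, ear_length_cm), label).
train = [
    ((4.0, 6.0), "cat"),
    ((5.0, 7.0), "cat"),
    ((20.0, 10.0), "dog"),
    ((30.0, 12.0), "dog"),
]

print(nearest_neighbor_predict(train, (6.0, 6.0)))
print(nearest_neighbor_predict(train, (25.0, 11.0)))
```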

Unsupervised learning is when the system learns from data that has not been labeled. No target values exist for the given data. The program learns to find patterns or structures in the data without explicit guidance. Common tasks in unsupervised learning include clustering, where the algorithm groups similar data points together, and dimensionality reduction, where the algorithm lowers the number of variables or features while keeping the important information.
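Clustering can be illustrated with k-means, a widely used algorithm that alternates between assigning points to the nearest cluster center and moving each center to the mean of its points. The sketch below is a simplified one-dimensional version with made-up data and fixed starting centers (an assumption made so the run is reproducible); `k_means_1d` is a hypothetical helper.

```python
def k_means_1d(points, centroids, iterations=10):
    """Cluster 1-D points around the given starting centroids."""
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        # Assignment step: put each point in the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    return centroids, clusters


points = [1, 2, 3, 10, 11, 12]  # made-up data with two obvious groups
centroids, clusters = k_means_1d(points, centroids=[0.0, 5.0])
print(centroids, clusters)
```

No labels are given, yet the algorithm separates the low values from the high values on its own.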

Reinforcement learning is a way to teach an AI system how to interact with its environment to get the most out of a reward signal. The system learns by taking actions and getting feedback on them through rewards or penalties. The goal is to find the best plan or policy that maximizes the total reward over time. Reinforcement learning has been used successfully in game playing, robotics, and systems that make decisions on their own.
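The reward-driven update can be sketched with Q-learning on a tiny corridor of four positions, where reaching the last position earns a reward of 1. Everything here is an assumption made for illustration: the environment, the reward, and the learning rate and discount values. To keep the run reproducible, the sketch tries every action in every state on each pass instead of exploring randomly, which a real agent would normally do.

```python
ACTIONS = {"left": -1, "right": +1}
GOAL = 3  # hypothetical corridor with states 0, 1, 2 and a goal at 3


def step(state, action):
    """Move along the corridor; reaching the goal pays a reward of 1."""
    next_state = min(max(state + ACTIONS[action], 0), GOAL)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward


def train(sweeps=50, alpha=0.5, gamma=0.9):
    """Learn action values with the Q-learning update rule."""
    q = {(s, a): 0.0 for s in range(GOAL) for a in ACTIONS}
    for _ in range(sweeps):
        for s in range(GOAL):
            for a in ACTIONS:
                nxt, reward = step(s, a)
                # Value of the best follow-up action (0 once the goal is reached).
                future = 0.0 if nxt == GOAL else max(q[(nxt, b)] for b in ACTIONS)
                q[(s, a)] += alpha * (reward + gamma * future - q[(s, a)])
    return q


q = train()
# The learned policy picks the highest-valued action in each state.
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)}
print(policy)
```

The learned values are highest next to the goal and shrink by the discount factor with each step away from it, so the policy moves right everywhere.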

Machine Learning programs use many different methods, such as linear regression, decision trees, support vector machines, neural networks, and deep learning models. These programs look at the given data, look for trends, and build models that predict and draw conclusions from new data that hasn’t been seen before.

Machine learning has many uses in the real world, such as recognizing images and voices, processing natural language, making recommendations, detecting fraud, enabling self-driving cars, and diagnosing diseases. It has changed businesses and enabled breakthroughs by automating chores, extracting insights from data, and improving decision-making processes.

What Is the Relationship Between AI and Machine Learning?

Machine learning falls under the umbrella of AI and is, therefore, closely tied to the field. They are not the same, although they are related.

Artificial intelligence is a wide topic that covers the creation of computer systems or machines that carry out activities that need human intelligence. Learning, reasoning, problem-solving, and decision-making are all aspects of intelligence that need to be emulated to achieve the goal. Machine Learning is one example of AI technology, alongside rule-based and expert systems as well as NLP, computer vision, and robotics.

Machine Learning is the area of Artificial Intelligence concerned with teaching computers to draw conclusions and make predictions from data on their own, without being explicitly programmed to do so. The method relies heavily on collected data to train machines to do better at a certain activity. Pattern recognition, prediction, data clustering, and the discovery of latent structures in data are just some of the many tasks that Machine Learning algorithms perform.

AI and Machine Learning can be compared in terms of scope, learning capacity, flexibility, automation, adaptability, and their data-driven approach.

The larger definition of artificial intelligence includes all the methods and processes used to make smart computers. Machine learning is a specialization within it.

Algorithms developed for Machine Learning aim to help computers learn from experience and improve at certain jobs as more data is collected. One of Machine Learning’s most appealing qualities is its capacity to learn.

AI’s flexibility extends well beyond Machine Learning to include other methods, such as rule-based and expert systems. This allows AI systems to adopt various strategies, each tailored to the specifics of the challenge at hand.

Artificial Intelligence and Machine Learning aim to automate tasks that previously required human intelligence. Machine learning algorithms effectively automate jobs by recognizing patterns and then drawing inferences or taking action without further programming.

Algorithms that use Machine Learning learn from previous experiences and adapt to new information to increase their efficiency and accuracy over time. They adapt to changing circumstances and make better forecasts or judgments.

Machine Learning depends significantly on data to discover patterns and train models. Machine learning algorithms perform only as well as the quality of their training data allows.

Machine Learning is an area of artificial intelligence that focuses on the development of algorithms that allow computers to learn from data. Machine learning is essential to AI since it allows computers to learn. Machine Learning is only one method that AI uses to mimic human intellect.

How Does Natural Language Processing (NLP) Contribute to AI?

Natural Language Processing (NLP) helps AI in seven ways, including Language Understanding, Language Generation, Information Retrieval, Conversational AI, Sentiment Analysis and Opinion Mining, Text Summarization and Extraction, and Language Adaptation and Personalization.

Natural Language Processing lets machines understand and analyze written and spoken human language. Language understanding includes tasks such as text classification, named entity recognition, sentiment analysis, part-of-speech tagging, and syntactic parsing. By understanding language, AI systems figure out what people are talking about, find the most important information, and understand the context.

Natural Language Processing makes it possible for machines to generate language that sounds human. Language generation covers things such as writing new text, summarizing it, translating it into another language, and building dialogue systems. NLP models generate responses that make sense and fit the situation. This makes it possible for AI to work well with people.

Natural Language Processing (NLP) helps artificial intelligence systems find the information they need by going through a lot of text to find what they are looking for. Information retrieval includes tasks such as document retrieval, question answering, and recommending what to read. NLP systems figure out what information people are looking for and use text sources to find the most relevant information.

Natural Language Processing plays a vital role in developing conversational AI systems, such as chatbots and virtual assistants. These systems rely on NLP techniques to understand user queries, provide accurate responses, and engage in natural, human-like conversations. NLP allows AI systems to process user input, handle variations in language, and respond appropriately in real time.

NLP lets AI systems read and understand how people feel and what they think about something in a text. Sentiment analysis techniques help figure out if a review, social media post, or piece of customer feedback is positive, negative, or neutral. This information helps companies figure out what the public thinks, how happy their customers are, and how to make decisions based on facts.
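The simplest form of sentiment analysis counts positive and negative words from a fixed word list. The sketch below assumes that lexicon-based approach; the word lists, the example sentences, and the function name `sentiment` are made up for illustration. Production systems typically use trained models, which cope with negation and context far better than word counting does.

```python
# Tiny hypothetical word lists; real lexicons contain thousands of entries.
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "poor", "hate", "terrible", "slow"}


def sentiment(text):
    """Classify text as positive, negative, or neutral by counting words."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("I love this phone, the camera is great!"))
print(sentiment("Terrible battery and slow updates."))
print(sentiment("The box arrived on Tuesday."))
```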

NLP methods make it possible for large amounts of text data to be automatically summarized and for key information to be extracted. AI systems summarize articles, reports, and other documents by pulling out the most important facts and details or giving short recaps. NLP systems identify the most important entities, events, or ideas. This saves time and effort when dealing with information.

NLP helps AI systems respond appropriately to different languages, dialects, or preferences. AI models are trained to understand and use language that is specific to a person or place using NLP. This lets experiences be personalized, languages be translated, and AI apps work in different regions.

Natural Language Processing is an important part of AI that lets machines understand, generate, and communicate in human language. It makes it easier for people and AI systems to talk to each other. It also makes it easier to process information and lets AI be used in chat, language translation, sentiment analysis, and content recommendation.

What Is the Role of Neural Networks in AI?

Neural networks are an important part of AI because they let machines learn from data, spot patterns, and make predictions or choices. Neural networks have five main roles: learning from data, recognizing patterns, nonlinear mapping, deep learning, and adapting and generalizing.

Neural Networks are made to learn from data through a process called training. During training, the network is shown both the input data and the desired output or target values. It adjusts the weights that connect the artificial neurons based on how far the predicted output is from the desired output. Neural Networks learn to approximate the underlying relationships and trends in the data by updating these weights over and over again.
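The weight-update loop can be shown with a single artificial neuron trained by the classic perceptron rule, where each weight moves in proportion to the error between the desired and predicted output. This is a deliberately small sketch: it learns the logical AND function, and the learning rate, epoch count, and function names are assumptions chosen for illustration. Multi-layer networks use gradient-based training (backpropagation) instead, but the idea of repeatedly adjusting weights to reduce error is the same.

```python
def predict(weights, bias, inputs):
    """Fire (output 1) when the weighted sum of inputs exceeds zero."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(examples, epochs=20, learning_rate=1.0):
    """Adjust weights in proportion to the error on each example."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Training data for logical AND: output is 1 only when both inputs are 1.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_EXAMPLES)
outputs = [predict(weights, bias, inputs) for inputs, _ in AND_EXAMPLES]
print(weights, bias, outputs)
```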

Neural Networks automatically learn from complicated data and extract features that help them identify objects, recognize speech, or sort data into different groups. They find hierarchical and nonlinear relationships in data by using their layered structure and interconnected units. This makes them good at recognizing patterns.

Neural networks learn relationships between inputs and outputs that aren’t straight lines. Because they are flexible, they handle complicated and nonlinear relationships in the data that are hard for standard algorithms to capture. Neural Networks solve complex AI problems because they are built in layers and have activation functions at each unit, which lets them model highly nonlinear and complicated mappings.
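XOR (exclusive or) is a standard example of a relationship that no single straight line can separate, yet a network with one hidden layer and an activation function at each unit can represent it. In the sketch below the weights are hand-picked for illustration rather than learned, and a simple step function stands in for the activation; both are assumptions made to keep the example short.

```python
def step(value):
    """Step activation: output 1 when the input is positive, else 0."""
    return 1 if value > 0 else 0


def xor_network(x1, x2):
    """Two-layer network with hand-picked weights that computes XOR."""
    # Hidden layer: one unit behaves like OR, the other like AND.
    h_or = step(1.0 * x1 + 1.0 * x2 - 0.5)
    h_and = step(1.0 * x1 + 1.0 * x2 - 1.5)
    # Output layer: fire when OR is on but AND is off.
    return step(1.0 * h_or - 1.0 * h_and - 0.5)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_network(a, b))
```

Removing the hidden layer, or the activation functions, would collapse the network back into a single linear decision boundary, which cannot produce this output pattern.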

Deep Learning, a branch of AI that involves training models with many layers, is built on Neural Networks. Deep Learning architectures such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) have done very well in areas such as computer vision, natural language processing, and speech recognition. Neural Networks learn hierarchical representations that Deep Learning models use to extract high-level features and figure out complex relationships in the data.

Neural networks are flexible and apply what they’ve learned to data they haven’t seen before. Once trained, Neural Networks use the relationships and patterns they’ve learned to make predictions or choices about new data. Neural Networks work well in real-world applications because they deal with noisy or missing data, tolerate variation, and adapt to different input scenarios.

Neural Networks are an important part of AI because they let computers learn from data, spot patterns, and make predictions or choices. They are very good at jobs that involve recognizing patterns, nonlinear mapping, and complex data relationships. Deep Learning is based on neural networks, which make it possible to make AI models that solve hard problems in areas such as computer vision, natural language processing, and speech recognition.

What Are Some Examples of AI Applications in Various Industries?

Artificial Intelligence (AI) has different applications in different industries, including AI in healthcare, finance, transportation, manufacturing, retail, and customer service.

In healthcare, Artificial Intelligence (AI) systems analyze medical images such as X-rays, MRIs, and CT scans to assist in the diagnosis of diseases or abnormalities. AI is used to analyze vast amounts of data and identify potential drug candidates, accelerating the drug discovery process. It also helps in analyzing patient data and genetic information to provide tailored treatment plans and personalized healthcare recommendations.

Artificial Intelligence algorithms identify patterns and anomalies in financial transactions to detect fraudulent activities and prevent financial crimes. The AI-powered algorithms analyze market data and make real-time trading decisions to optimize investment strategies. The chatbots and virtual assistants powered by AI provide personalized customer support and answer queries related to banking and financial services.

Artificial Intelligence allows self-driving cars and autonomous vehicles by processing sensor data, analyzing the environment, and making real-time driving decisions in the transportation sector. AI algorithms optimize traffic flow, manage congestion, and improve transportation systems through real-time data analysis and decision-making. AI systems analyze sensor data from vehicles and infrastructure to predict maintenance needs and optimize maintenance schedules. AI has become an integral component in modernizing and enhancing transportation services.

Artificial Intelligence has become a key to efficiency and innovation in manufacturing. AI is used in quality control, where AI-based systems inspect products and identify defects or deviations from quality standards during the manufacturing process. AI’s work extends to supply chain optimization, as AI algorithms optimize inventory management, demand forecasting, and logistics, boosting supply chain efficiency as a whole. AI allows robots to undertake tasks such as assembly, packaging, and material handling, enhancing productivity and reducing costs.

Artificial intelligence is reshaping customer experiences and enhancing efficiency in the retail sector. AI-powered recommendation systems analyze customer data and behavior to provide personalized product recommendations, improving customer engagement and sales. AI enables visual search, allowing customers to find products by uploading images or using camera input. AI algorithms analyze sales data and external factors to optimize inventory levels, reducing stockouts and overstocking.

In customer service, Artificial Intelligence has become a fundamental tool for delivering improved and more efficient services. AI-powered chatbots and virtual assistants provide automated customer support and assist with inquiries, enhancing customer service experiences. AI systems analyze customer feedback, social media posts, and reviews to understand customer sentiment and improve brand perception.

AI’s versatility allows it to be applied across various domains, ranging from healthcare and finance to transportation, manufacturing, retail, and customer service.

How Does AI Impact Job Automation?

Listed below are examples of AI’s impact on job automation.

- Repetitive and Routine Tasks: AI automation takes over repetitive and routine tasks that require little to no creativity or decision-making. These tasks include data entry, document processing, basic customer inquiries, and repetitive manufacturing processes.

- Data Analysis and Insights: AI algorithms analyze vast amounts of data and extract meaningful insights at a speed and scale that humans cannot achieve. This affects jobs that involve data analysis, market research, trend analysis, and financial modeling.

- Customer Service and Support: AI-powered chatbots and virtual assistants handle basic customer inquiries, provide support, and assist with transactions. This reduces the need for human customer service representatives to handle routine queries.

- Manufacturing and Logistics: AI-enabled robots and automation systems take over tasks in manufacturing and logistics, such as assembly line operations, packaging, sorting, and inventory management. Manual labor in these areas is reduced.

- Transportation and Delivery: AI technology is used to automate tasks in transportation and delivery, such as autonomous vehicles and drones for transportation and robotic systems for warehouse management and order fulfillment.

- Healthcare Diagnostics: AI systems analyze medical images, such as X-rays and CT scans, to assist in disease diagnosis. AI algorithms detect abnormalities and assist in making diagnoses that impact jobs in radiology and medical imaging.

- Legal Research: AI-powered systems analyze legal documents, case histories, and precedents to assist lawyers in legal research and document preparation. This automates parts of legal research.

- Financial Services: AI algorithms automate tasks in financial services, such as fraud detection, risk assessment, algorithmic trading, and customer service. This affects jobs in areas such as fraud investigation, risk analysis, and basic financial advisory roles.

AI technology changes or eliminates some jobs, but it also creates new ones that require human skills in system development, AI training, data analysis, and decision-making. It spurs roles centered on ethical AI implementation and changes the nature of work by automating repetitive tasks and boosting productivity.

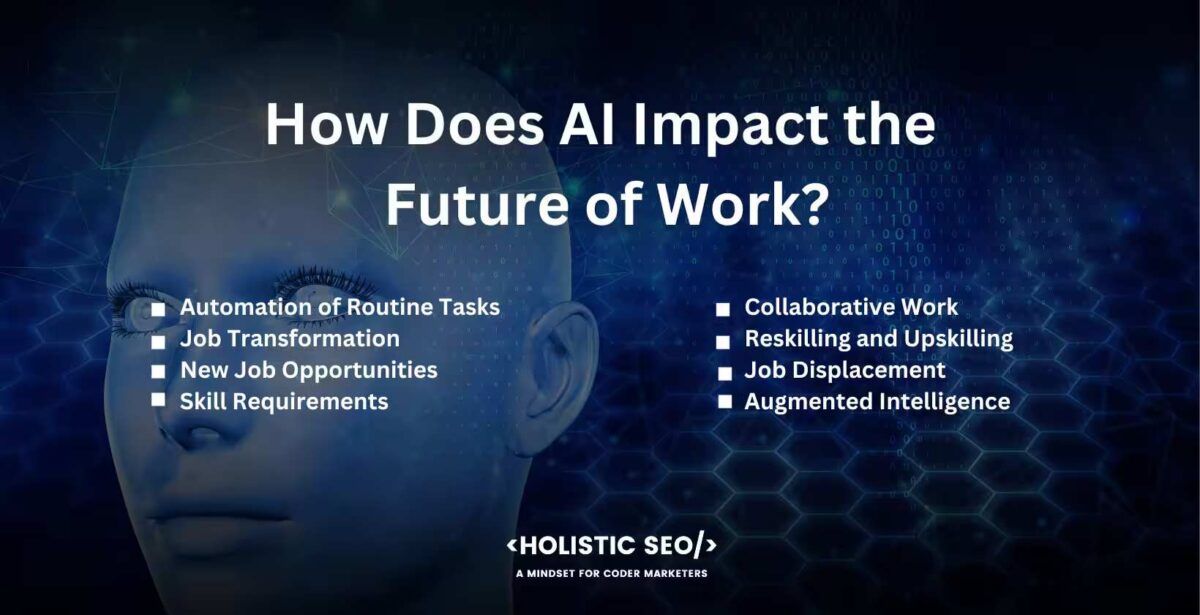

How Does AI Impact the Future of Work?

Listed below are some ways in which AI impacts the future of work.

- Automation of Routine Tasks: AI automates repetitive and routine tasks, freeing up human workers to focus on more complex and creative work. It increases efficiency and productivity in various industries.

- Job Transformation: AI technology changes job roles and responsibilities. Tasks within jobs are automated, leading to the evolution of existing roles and the emergence of new ones that require AI-related skills, such as data analysis, AI system development, and ethical AI governance.

- New Job Opportunities: AI automates certain tasks and creates new job opportunities. AI implementation requires skilled professionals who develop, manage, and optimize AI systems. New roles include AI trainers, AI ethicists, data scientists, and AI solution architects.

- Skill Requirements: The rise of AI emphasizes the need for a new set of skills. Workers need to adapt and acquire skills that complement AI capabilities, such as critical thinking, creativity, and complex problem-solving, and human-centered skills such as empathy and emotional intelligence.

- Collaborative Work: AI facilitates collaboration between humans and machines. Workers increasingly collaborate with AI systems, leveraging their capabilities to augment their work and make more informed decisions.

- Reskilling and Upskilling: As AI technology evolves, reskilling and upskilling become crucial to keep up with changing job requirements. Workers need to undergo continuous learning and training to adapt to AI-driven workplaces and acquire the necessary skills.

- Job Displacement: AI creates new opportunities, but it also leads to job displacement in certain sectors. Jobs that are highly repetitive or easily automated are at risk. The overall impact on employment depends on how AI is integrated and the extent to which new jobs are created.

- Augmented Intelligence: AI enhances human capabilities rather than replaces them. Augmented intelligence involves using AI systems as tools to amplify human decision-making, productivity, and creativity, leading to more efficient and effective work processes.

It is important to note that the future impact of AI on work is complex and multifaceted. It opens up opportunities for innovation, creativity, and higher-value work while it automates certain tasks and changes job landscapes. Effective adaptation, lifelong learning, and proactive workforce policies are crucial to navigate and thrive in the AI-driven future of work.

What Are the Potential Benefits of AI?

The three potential benefits of AI are automation and productivity, enhanced decision-making, and improved personalization and user experience.

Automation and productivity: AI handles tedious, repetitive tasks, which makes people more productive and efficient. By taking care of routine work, AI lets people focus on more difficult and creative jobs. This saves time and money, lets businesses use their resources more efficiently, and encourages new ideas.

Enhanced decision-making: AI examines huge amounts of data, finds trends, and provides insights that help people make decisions. AI algorithms help businesses use data to make choices that are more accurate and timely. AI systems process and analyze data faster and on a larger scale than humans can, which helps businesses learn from their data and make informed choices.

Improved personalization and user experience: AI analyzes user data, preferences, and behavior to tailor what each person sees. It is used in systems that suggest products, movies, or other content based on what each person wants. AI-driven chatbots and virtual assistants offer personalized service and suggestions to customers. By understanding and adapting to user requirements, AI improves user experience and boosts customer satisfaction.

These gains are achievable only if AI systems are built and used responsibly and ethically. It is important to address concerns about privacy, bias, and transparency to make sure AI has a positive effect.

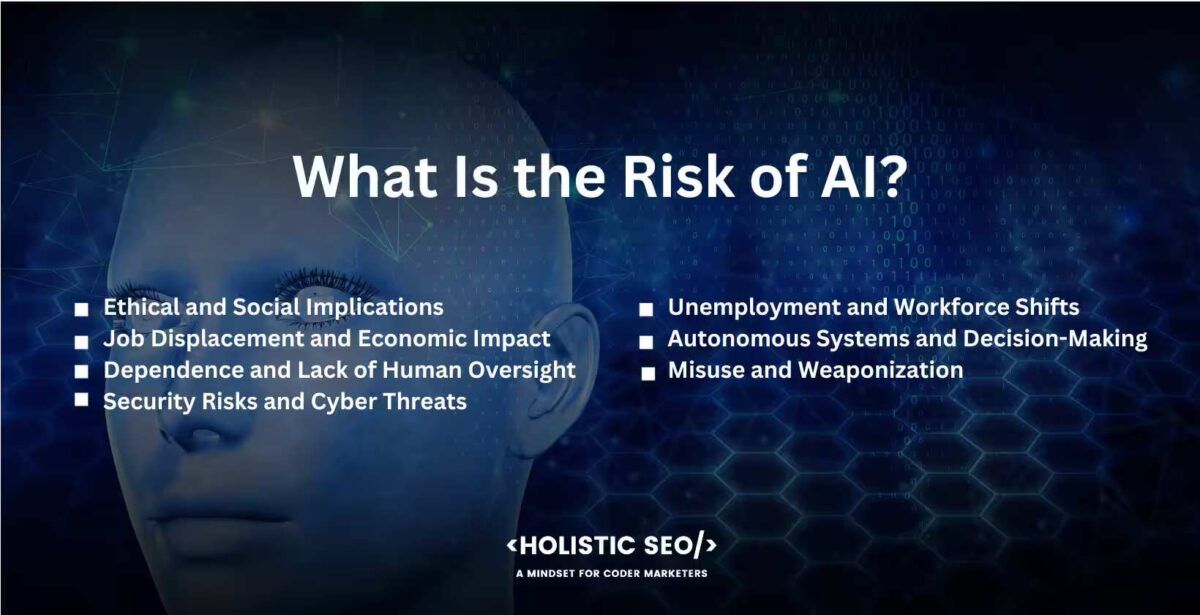

What Is the Risk of AI?

Listed below are some risks associated with AI.

- Ethical and Social Implications: AI raises ethical questions about privacy, bias, and transparency. AI systems need a lot of data, which raises privacy concerns and makes it possible for data to be misused. Biased data or algorithms make discrimination and unfair results worse. It is important to make sure that AI is developed ethically and to address these issues.

- Job Displacement and Economic Impact: AI takes jobs away from some industries because it is used to automate tasks. Jobs that involve repetitive or routine tasks are particularly vulnerable. Workers need to update their skills and learn new ones so they can adapt to the changing job market. The effects of job loss and wage inequality on the economy need to be examined.

- Dependence and Lack of Human Oversight: Putting too much faith in AI systems without enough human control leads to unintended results. AI algorithms work from the data they are trained on, and they can make mistakes or act in a biased way. Human input and judgment are needed to make sure that AI is used responsibly, to confirm outputs, and to stop harm from happening.

- Security Risks and Cyber Threats: AI systems become targets for malicious actors. They are exposed to adversarial attacks, in which AI models are manipulated into giving wrong results. AI also enables complex cyber threats, such as automated hacking or social engineering.

- Unemployment and Workforce Shifts: The labor market changes when AI is adopted, which leads to unemployment or the need to switch jobs. It is important to help affected workers make a smooth shift and find new jobs or learn new skills.

- Autonomous Systems and Decision-Making: AI systems that make decisions on their own, such as self-driving cars or drones, raise questions about responsibility and liability. Making sure that autonomous AI systems are safe and act ethically is a major challenge.

- Misuse and Weaponization: Autonomous weapons and the malicious use of AI in cyber warfare raise worries about armed conflict and the possibility that humans lose control over how important decisions are made.

These risks require a collaborative effort involving governments, organizations, researchers, and policymakers. Developing robust regulations, establishing ethical guidelines, promoting transparency, and fostering responsible AI practices are essential for harnessing the potential of AI while mitigating its risks.

1. Job Displacement

Job displacement refers to the potential risk of AI and automation replacing human workers in various industries and job roles. Automated tasks lessen the demand for human labor in such sectors.

Job displacement becomes a risk when the adoption of AI and automation leads to a significant reduction in the demand for human workers in certain job roles. As machines become capable of performing tasks more efficiently and cost-effectively, organizations choose to automate processes, resulting in potential job losses for humans. This disrupts the job market and makes it harder for displaced workers to find new employment opportunities.

The introduction of self-checkout systems in retail stores is an example of job displacement. With self-checkout machines, customers scan and pay for their purchases without the need for a human cashier. This automation reduces the need for cashier positions, leading to potential job losses in the retail sector. Self-checkout systems improve efficiency and convenience for customers, but they reduce employment opportunities for human cashiers.

2. Control and Accountability

Control and accountability refer to the potential risks associated with the lack of human oversight and control over AI systems. It involves the question of who is responsible for the actions and decisions made by AI systems.

Control and accountability become a risk when AI systems operate autonomously without sufficient human oversight or intervention. If AI systems make decisions or take actions that result in negative outcomes, it is challenging to attribute responsibility and determine accountability for those actions.

The use of autonomous vehicles is one example. As self-driving cars become more advanced, there is a need to establish guidelines and regulations for liability and accountability in the event of accidents or collisions involving them. Determining who is responsible (the manufacturer, the software developer, or the vehicle owner) is complex due to the autonomous nature of the vehicle’s decision-making.

3. Bias and Discrimination

Bias and discrimination refer to the potential risks of AI systems exhibiting biased behavior or perpetuating discrimination based on race, gender, or other protected characteristics. Bias arises from the data used to train AI models or from the algorithms themselves.

Bias and discrimination become a risk when AI systems produce unfair or discriminatory outcomes, reflecting and amplifying existing societal biases. If the training data contains biases, or if the algorithms themselves aren’t designed to mitigate them, AI systems make discriminatory decisions or take discriminatory actions.

Facial recognition systems have been found to exhibit biases in recognizing individuals with darker skin tones or from underrepresented groups. These biases lead to misidentifications or a higher rate of false positives, impacting individuals’ rights and potentially perpetuating racial discrimination.
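
One way to make the "higher rate of false positives" concrete is to measure the false positive rate separately for each group. The sketch below is illustrative only: the function name, the record layout, and the sample data are all assumptions invented for this example, not results from any real system.

```python
from collections import defaultdict

def false_positive_rate_by_group(records):
    """Return {group: FPR}, where FPR = false positives / actual non-matches.

    Each record is a (group, actual_match, predicted_match) tuple.
    """
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, actual, predicted in records:
        if not actual:  # only true non-matches can produce a false positive
            negatives[group] += 1
            if predicted:
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in negatives.items() if n > 0}

# Hypothetical evaluation records, invented purely for illustration.
sample = [
    ("group_a", False, False), ("group_a", False, False),
    ("group_a", False, True),  ("group_a", False, False),
    ("group_b", False, True),  ("group_b", False, True),
    ("group_b", False, False), ("group_b", False, False),
    ("group_b", True, True),
]
rates = false_positive_rate_by_group(sample)
print(rates)  # {'group_a': 0.25, 'group_b': 0.5}
```

A large gap between groups, as in this made-up sample, is the kind of signal that would prompt a closer look at the training data and the model.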

4. Security Threats

Security threats refer to the potential risks of AI systems being targeted by malicious actors or used as tools for cyber attacks. AI systems are vulnerable to hacking, adversarial attacks, or exploitation for malicious purposes.

Security threats become risks when AI systems are compromised, leading to unauthorized access, data breaches, or manipulation of the AI’s behavior. Adversarial attacks manipulate AI models to produce incorrect outputs or make them vulnerable to exploitation.

Deepfake technology is an example of a security threat associated with AI. Deepfake videos use AI algorithms to manipulate or create videos that falsely depict individuals saying or doing things they never did. It poses risks for misinformation, identity theft, and potential reputational damage.

5. Privacy and Security Concerns

Privacy and security concerns refer to the risks associated with the collection, use, and protection of personal data by AI systems. AI often analyzes and processes large amounts of data, raising concerns about data privacy and security.

Privacy and security concerns become risks when AI systems collect and store personal data without proper consent or security measures. Mishandling sensitive personal information leads to privacy violations, identity theft, and other security incidents if it is exposed to unauthorized individuals or used for unethical purposes.

Social media platforms and online service providers that use AI algorithms to analyze user data for targeted advertising or personalized recommendations face privacy concerns. Data breaches or unauthorized access to personal information highlight the risks associated with AI systems that handle large volumes of sensitive user data.

6. Enhanced Efficiency

Enhanced efficiency is the potential benefit of AI in improving productivity, speed, and accuracy in various processes. AI systems automate tasks, optimize operations, and provide real-time insights, leading to increased efficiency.

Enhanced efficiency becomes a risk when AI systems replace human workers without proper support or transition plans. Rapid automation and optimization lead to job displacement or create imbalances in the workforce if human workers are not adequately reskilled or provided with alternative employment opportunities.

Warehouse automation using AI-powered robots and autonomous systems significantly enhances efficiency in inventory management, order fulfillment, and logistics. Automation improves speed and accuracy, but it displaces warehouse workers who previously performed these tasks manually.

These risks are not inherent to AI itself but are associated with the development, deployment, and management of AI systems. Mitigating these risks requires responsible AI practices, ethical guidelines, and appropriate regulatory frameworks.

Can AI-Optimized Hardware Assist in Natural Language Processing Tasks?

Yes, AI-optimized hardware is built and tuned for AI tasks such as natural language processing (NLP). It is designed to speed up the computations that NLP tasks need, giving faster processing and better performance than traditional hardware.

NLP tasks such as language modeling, sequence generation, text classification, sentiment analysis, and machine translation require complex calculations. They demand substantial computational power and efficient processing of large amounts of text data.

Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs) are examples of AI-optimized hardware made to handle the parallel processing needs of AI tasks. They excel at matrix operations and can speed up NLP tasks considerably, delivering results faster and more efficiently.

GPUs were originally made for graphics-intensive applications, and their ability to process data in parallel makes them well suited to NLP tasks. They are good at large-scale matrix multiplications and neural network calculations, which speeds up NLP methods and models.

Google designed TPUs specifically for AI workloads. They are very good at processing neural networks and are increasingly used in NLP applications. TPUs perform well on tasks such as natural language understanding, natural language generation, and machine translation. For these workloads, they are faster and use less energy than traditional CPUs.

By using AI-optimized hardware, NLP tasks gain more computing power, better efficiency, and faster processing times. This makes it possible to build more advanced NLP models, improve the accuracy of language processing tasks, and handle bigger datasets. AI-optimized hardware is a key part of improving the capabilities of NLP and of AI systems in general.

Can AI-Optimized Hardware Accelerate Deep Learning Algorithms?

Yes, hardware optimized for AI speeds up deep learning algorithms. Complex computations are required by deep learning algorithms, which necessitate significant computing power and memory resources. Deep learning models’ training and inference processes are accelerated by AI-optimized hardware, such as Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs).

Deep learning algorithms are accelerated by AI-optimized hardware in multiple ways, including parallel processing, increased computational capacity, efficient memory management, and customized architecture.

Deep learning algorithms entail extensive matrix operations and neural network computations. AI-optimized hardware such as GPUs and TPUs excels at parallel processing. Deep learning algorithms run more quickly when multiple processors or processing units perform calculations simultaneously.
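
The reason matrix operations parallelize so well is that each output row (indeed, each output element) can be computed independently of the others. The following is a minimal sketch of that idea in plain Python; the function names are made up for this example. Python threads do not deliver a real speedup for this kind of arithmetic, so the code only illustrates how the work splits into independent pieces, which is the property GPUs and TPUs exploit in hardware.

```python
from concurrent.futures import ThreadPoolExecutor

def row_times_matrix(row, matrix):
    """Compute one output row: the dot product of `row` with each column."""
    return [sum(a * b for a, b in zip(row, col)) for col in zip(*matrix)]

def matmul_parallel(a, b, workers=4):
    """Multiply matrices a and b, handing each output row to a worker.

    No output row depends on any other, so rows can be computed in any order.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: row_times_matrix(row, b), a))

a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
product = matmul_parallel(a, b)
print(product)  # [[19, 22], [43, 50]]
```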

AI-optimized hardware is built to provide a high level of computational power. GPUs, originally designed for graphics processing, have evolved into powerful general-purpose processors capable of deep learning. TPUs are designed for AI workloads and offer even greater performance and energy efficiency for them, further accelerating deep learning computations.

Deep learning models require a substantial amount of memory for data storage and processing. Hardware optimized for AI is designed to manage memory operations efficiently, enabling quicker data access and transfer within deep learning algorithms. This optimization reduces the latency and bottlenecks associated with memory-intensive computations.

TPUs and other AI-optimized hardware frequently incorporate specialized architectures designed for deep learning workloads. These architectures are designed to optimize the computations specific to deep learning algorithms, resulting in quicker and more efficient processing. The AI-optimized hardware’s customized architecture enables it to execute deep learning computations with greater speed and efficiency than traditional CPUs.

Utilizing AI-optimized hardware significantly accelerates training and inference times for deep learning algorithms. The acceleration enables researchers and practitioners to train larger and more complex deep learning models, process large datasets, and achieve results more quickly. AI-optimized hardware is essential to the development and scalability of deep learning, allowing breakthroughs in disciplines such as computer vision, natural language processing, and speech recognition.

How Does AI-Optimized Hardware Contribute to The Development of Autonomous Vehicles?

There are five ways that AI-optimized hardware aids in the development of autonomous vehicles: processing power, deep learning models, edge computing, energy efficiency, and reliability and durability.

Processing power matters because autonomous vehicles use a combination of sensors, such as cameras, lidars, radars, and ultrasonic sensors, to perceive the surrounding environment. AI-optimized hardware helps process and fuse data from these sensors in real time. Specialized processors handle the high data throughput and perform complex sensor fusion algorithms, allowing the vehicle to accurately detect and understand its surroundings.
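
To give a feel for what "fusing" sensor data means, here is a deliberately simple sketch. It uses inverse-variance weighting, a textbook technique chosen for illustration; the text above does not say which fusion algorithm real vehicles use, and production systems are far more elaborate. The function name and the sensor readings are assumptions made up for this example.

```python
def fuse_estimates(estimates):
    """Fuse (value, variance) pairs using inverse-variance weighting.

    Sensors with lower variance (less noise) get more weight.
    Assumes every variance is positive and the list is not empty.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    fused_variance = 1.0 / total
    return fused_value, fused_variance

# Hypothetical distance-to-obstacle readings in meters: (value, variance).
lidar = (10.0, 0.25)   # assumed to be the less noisy sensor
radar = (12.0, 1.0)
distance, variance = fuse_estimates([lidar, radar])
print(round(distance, 3), round(variance, 3))  # 10.4 0.2
```

The fused estimate lands closer to the lidar reading because it was assigned the smaller variance, and the fused variance is lower than either input.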

Deep learning models are used in autonomous vehicles for tasks such as object detection, pedestrian recognition, lane detection, and traffic sign recognition. AI-optimized hardware, such as GPUs and TPUs, accelerates the training and inference of these deep learning models. The parallel processing capabilities of GPUs and TPUs allow faster and more efficient execution of complex deep learning algorithms, enhancing the perception capabilities of autonomous vehicles.

Edge computing is responsible for making real-time decisions and controlling the actions of autonomous vehicles. AI-optimized hardware provides the computational power needed to process sensor data, analyze the environment, and make driving decisions. Specialized processors and AI accelerators ensure low latency and high-speed processing, allowing the vehicle to react quickly to changing conditions on the road.

Energy efficiency matters because autonomous vehicles, especially electric ones, need to manage energy consumption effectively. AI-optimized hardware executes complex calculations more efficiently, saving energy and extending vehicle range.

Reliability and durability are paramount in autonomous vehicles. AI-optimized hardware provides redundant processing units in critical systems, which allows for fail-safe mechanisms. Redundant hardware ensures that even if one component fails, the vehicle’s safety-critical functions still operate reliably.
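
One common way to use redundant processing units is to run the same computation on several of them and accept the answer most of them agree on. The sketch below shows that majority-voting idea; it is an assumption chosen for illustration rather than a description of how any particular vehicle works, and the function name and command strings are hypothetical.

```python
from collections import Counter

def majority_vote(outputs):
    """Return the value reported by a strict majority of units, else None.

    Returning None signals that no safe consensus exists, so the caller
    can fall back to a fail-safe action.
    """
    if not outputs:
        return None
    value, count = Counter(outputs).most_common(1)[0]
    return value if count > len(outputs) / 2 else None

# Three hypothetical redundant units; one has failed and disagrees.
decision = majority_vote(["brake", "brake", "accelerate"])
print(decision)  # brake
```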

By leveraging AI-optimized hardware, autonomous vehicles efficiently process vast amounts of sensor data, execute complex AI algorithms in real time, and make intelligent driving decisions. This allows the development of safer and more reliable vehicles that navigate complex environments autonomously.

How Does AI-Optimized Hardware Address Power and Energy Efficiency Concerns?

AI-optimized hardware helps address power and energy efficiency concerns because it is designed specifically to handle AI’s high computational needs while minimizing energy consumption.

AI-optimized hardware, such as application-specific integrated circuits (ASICs), Graphics Processing Units (GPUs), and Tensor Processing Units (TPUs), is engineered to run AI algorithms more efficiently than general-purpose CPUs. These specialized components handle more calculations per watt of power, providing high performance while keeping energy consumption lower. They achieve this through parallel computation, which accelerates AI workloads while consuming less energy than the largely serial computation of traditional CPUs. The approaches include ASICs, GPUs, TPUs, neuromorphic computing, edge AI, and software optimizations.

ASICs are customized for a particular use rather than for general-purpose computing. ASICs for AI are designed to perform specific operations, such as the matrix multiplications common in AI computations, very efficiently, which saves energy.

GPUs, which were originally developed for rendering video game graphics, are now widely used in AI computations due to their ability to handle multiple computations simultaneously. The parallel processing ability of GPUs makes them far more efficient at AI tasks than CPUs.

TPUs are designed specifically for AI tasks. They optimize the performance per watt of power by maximizing the number of computations that are performed simultaneously, improving energy efficiency.
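
"Performance per watt" is simply throughput divided by power draw. The short sketch below works through the arithmetic; the function name and every number in it are hypothetical, chosen only to show the calculation and not taken from any real chip.

```python
def operations_per_watt(operations_per_second, power_watts):
    """Return throughput per watt; higher means more work for the same power."""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return operations_per_second / power_watts

# Hypothetical figures chosen only to illustrate the arithmetic.
accelerator = operations_per_watt(100e12, 250)  # 100 trillion ops/s at 250 W
cpu = operations_per_watt(2e12, 100)            # 2 trillion ops/s at 100 W
advantage = accelerator / cpu
print(advantage)  # 20.0
```

In this made-up comparison the accelerator draws more power in absolute terms but still does 20 times more work per watt.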

Companies and research institutions are developing neuromorphic chips designed to mimic the human brain’s neural architecture. These chips promise large energy efficiency improvements for AI computations.

Edge AI involves running AI algorithms on the devices themselves, such as smartphones and IoT devices, rather than on a centralized cloud server. It reduces the amount of data that needs to be sent over a network, which saves energy.

Software optimizations such as pruning, quantization, and knowledge distillation contribute to energy efficiency by reducing the computational resources required for AI tasks. AI-optimized hardware, combined with effective software techniques, improves the energy efficiency of AI systems, a crucial factor given the increasing use of AI in today’s digital world.
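
Of the techniques named above, quantization is the easiest to show in a few lines: it stores numbers with fewer bits, trading a small loss of precision for less memory and cheaper arithmetic. Below is a minimal sketch of symmetric 8-bit quantization. The function names and sample weights are assumptions for illustration, and real frameworks use more sophisticated schemes.

```python
def quantize_int8(values):
    """Symmetric linear quantization of floats to integers in [-127, 127].

    Assumes `values` is a non-empty list of floats.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Map the integers back to approximate float values."""
    return [q * scale for q in quantized]

weights = [0.5, -1.27, 0.0, 1.27]  # hypothetical model weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q)  # [50, -127, 0, 127]
```

Each restored value differs from the original by at most half of `scale`, which is the precision given up in exchange for the smaller representation.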

What Role Does Memory Architecture Play in AI-Optimized Hardware?

Memory architecture plays a critical role in AI-optimized hardware by providing fast and efficient access to data, which is crucial for AI computations. It ensures that data is processed quickly and in parallel, allowing for high-performance AI algorithms.

Memory architecture in AI-optimized hardware is designed to deliver high bandwidth, low latency, and efficient data movement. Its quick retrieval and storage of data support the massive computational demands of AI algorithms. Memory architecture serves five functions in AI-optimized hardware, including bandwidth and latency, parallelism and vectorization, caching, memory hierarchy, and memory bandwidth optimization.

AI computations heavily rely on accessing and manipulating large amounts of data. Memory architectures with high bandwidth and low latency are crucial for efficiently feeding data to AI models. High-bandwidth memory (HBM) technology gives faster data transfer rates compared to traditional memory architectures, allowing faster data access for AI computations.

AI-optimized hardware employs memory architectures that facilitate parallel processing and vectorized operations. These architectures allow for the simultaneous retrieval and processing of multiple data elements, accelerating AI computations. For example, GPUs utilize memory structures that support parallel memory access, allowing efficient vectorized operations.

Caches play a vital role in AI-optimized hardware by storing frequently accessed data closer to the processing units, reducing latency, and improving overall performance. Efficient caching mechanisms, such as multi-level caches with intelligent data prefetching, help minimize memory access delays and optimize data availability for AI computations.
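
The effect of a cache can be sketched in software. The example below models a tiny cache with a least-recently-used (LRU) eviction policy and counts hits and misses. LRU is an assumption picked for illustration; hardware caches use a variety of policies, and prefetching is not modeled here. The class name, the `slow_memory` function, and the access pattern are all hypothetical.

```python
from collections import OrderedDict

class LRUCache:
    """A tiny cache that evicts the least recently used entry when full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def read(self, address, load_from_memory):
        if address in self.entries:
            self.hits += 1
            self.entries.move_to_end(address)  # mark as most recently used
            return self.entries[address]
        self.misses += 1
        value = load_from_memory(address)      # the slow path
        self.entries[address] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)   # evict least recently used
        return value

def slow_memory(address):
    """Hypothetical stand-in for a slow off-chip memory read."""
    return address * 10

cache = LRUCache(capacity=2)
for address in [1, 2, 1, 3, 2]:
    cache.read(address, slow_memory)
print(cache.hits, cache.misses)  # 1 4
```

Only the repeated read of address 1 is a hit here; a larger capacity or a friendlier access pattern would raise the hit count, which is exactly what cache design tries to achieve.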

AI-optimized hardware incorporates multi-level memory hierarchies, with different memory types serving different purposes. For example, on-chip memory such as scratchpad memory gives low-latency access and high bandwidth for frequently accessed data, while off-chip memory such as dynamic random-access memory (DRAM) provides larger capacity at the cost of higher latency. Proper management of the memory hierarchy is crucial for balancing performance, energy efficiency, and cost in AI hardware systems.
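
The trade-off between fast on-chip memory and slower off-chip memory is often summarized with the standard average memory access time formula: hit time plus miss rate times miss penalty. The sketch below applies it; the function name and the timing figures are hypothetical and serve only to show the calculation.

```python
def average_access_time(hit_time_ns, miss_rate, miss_penalty_ns):
    """Average access time = hit time + miss rate * miss penalty."""
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return hit_time_ns + miss_rate * miss_penalty_ns

# Hypothetical timings: 1 ns on-chip hit, 100 ns penalty for going off-chip.
baseline = average_access_time(1.0, 0.05, 100.0)  # about 6 ns
improved = average_access_time(1.0, 0.02, 100.0)  # about 3 ns
print(baseline, improved)
```

With these made-up numbers, cutting the miss rate from 5% to 2% roughly halves the average access time, which is why keeping frequently used data on chip pays off.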

AI algorithms involve extensive data movement, requiring efficient utilization of memory bandwidth. Memory architectures designed for AI-optimized hardware employ techniques such as memory compression, memory bandwidth partitioning, and data locality optimizations to maximize the utilization of available memory bandwidth.

Memory architecture in AI-optimized hardware ensures fast and efficient data access for AI computations. It delivers high bandwidth and low latency and supports parallelism, caching, and memory hierarchy optimizations, all of which are essential for achieving high-performance AI algorithms.

Can AI-Optimized Hardware Facilitate Real-Time Decision-Making?

Yes, AI-optimized hardware facilitates real-time decision-making. AI-optimized hardware, such as specialized processors and accelerators, is designed to handle the computational demands of AI algorithms efficiently. These hardware solutions, such as Graphics Processing Units (GPUs) or Tensor Processing Units (TPUs), provide high computational throughput. This allows AI algorithms to execute rapidly, so data is processed and analyzed fast enough for real-time decision-making.

Real-time decision-making requires low latency, meaning a short time between receiving data and generating a response. AI-optimized hardware, with its specialized architectures and optimized designs, reduces latency by minimizing data transfer bottlenecks, optimizing memory access, and providing efficient processing. Low-latency hardware allows quick decision-making based on real-time data inputs.
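
In practice, "low latency" is usually expressed as a time budget that each decision must meet. The sketch below measures how long a decision function takes and checks it against such a budget. The function names, the toy decision rule, and the 50-millisecond budget are assumptions for illustration only.

```python
import time

def run_with_deadline(decide, inputs, budget_seconds):
    """Run `decide(inputs)` and report whether it finished within the budget.

    Returns (result, elapsed_seconds, met_deadline).
    """
    start = time.perf_counter()
    result = decide(inputs)
    elapsed = time.perf_counter() - start
    return result, elapsed, elapsed <= budget_seconds

def pick_strongest_signal(readings):
    """Hypothetical decision rule: react to the largest reading."""
    return max(readings)

# A hypothetical 50 ms budget for one decision.
result, elapsed, on_time = run_with_deadline(pick_strongest_signal, [3, 9, 4], 0.05)
print(result, on_time)
```

A real-time system would also define what happens when the deadline is missed, for example falling back to a simpler rule.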

AI-optimized hardware incorporates memory architectures that prioritize efficient memory access. Quick and efficient access to data stored in memory is crucial for real-time decision-making. The memory hierarchy, caching mechanisms, and optimization techniques in AI-optimized hardware help minimize memory latency and allow rapid data retrieval, enhancing real-time decision-making capabilities.

Real-time decision-making requires processing multiple data streams or running complex algorithms simultaneously. AI-optimized hardware, designed with parallel processing capabilities, handles multiple computations in parallel. It allows the hardware to efficiently process data from multiple sources and perform complex calculations concurrently, facilitating real-time decision-making.

AI-optimized hardware takes energy efficiency into account, which is important for real-time decision-making systems deployed in resource-constrained environments. Energy-efficient hardware designs help minimize power consumption while maintaining high computational performance, ensuring sustained real-time decision-making capabilities without excessive energy requirements.

Real-time decision-making systems quickly process data, analyze it in real time, and create timely and informed judgments by exploiting the computational capacity, low latency, optimized memory access, parallel processing capabilities, and energy efficiency afforded by AI-optimized hardware. AI-optimized hardware is crucial in enabling the speed, efficiency, and responsiveness necessary for real-time decision-making applications across various domains.

How Does AI-Optimized Hardware Handle Parallel Processing for AI Workloads?

AI-optimized hardware executes AI tasks in parallel in five ways: multiple processing units, SIMD and SIMT architectures, parallel memory access, Tensor Processing Units, and task partitioning and scheduling.

Multiple processing units work simultaneously on different parts of a computational task, enabling parallel processing. Each processing unit operates independently, executing instructions in parallel and sharing the overall computational workload.

SIMD (Single Instruction, Multiple Data) and SIMT (Single Instruction, Multiple Threads) are architectural designs commonly found in AI-optimized hardware. SIMD architecture allows a single instruction to be applied to multiple data elements in parallel, while SIMT architecture extends the concept by applying the same instruction to multiple threads of execution. These architectures efficiently handle parallel processing by performing the same operation on multiple data elements simultaneously.

Parallel memory access: AI-optimized hardware incorporates memory architectures that facilitate parallel memory access. It allows for concurrent retrieval and storage of data from memory, enabling efficient data processing across multiple processing units. AI-optimized hardware minimizes data transfer bottlenecks and maximizes the utilization of processing units by optimizing memory access.

Tensor Processing Units (TPUs) are specialized AI accelerators designed specifically for deep learning workloads. They employ highly parallel architectures that excel at performing matrix operations, which are fundamental to many AI tasks. TPUs feature a large number of processing cores and dedicated memory, enabling efficient parallel processing of tensor-based computations.

Task partitioning and scheduling: AI-optimized hardware implements task partitioning and scheduling mechanisms to effectively distribute computational tasks across processing units. These mechanisms analyze the workload and allocate tasks to different cores or units, balancing the processing load and optimizing resource utilization. Task partitioning and scheduling help maximize parallelism and improve overall performance.
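
A simple way to picture load balancing is a greedy scheduler that always gives the next-largest task to whichever unit is currently least loaded. The sketch below implements that heuristic (often called longest-processing-time-first). It is one illustrative strategy, not the scheduler any specific hardware uses, and the function name, task names, and costs are hypothetical.

```python
import heapq

def assign_tasks(task_costs, num_units):
    """Greedily assign tasks to units, largest task first.

    Returns (assignment, loads): which tasks each unit runs and its total cost.
    """
    heap = [(0, unit) for unit in range(num_units)]  # (current load, unit id)
    heapq.heapify(heap)
    assignment = {unit: [] for unit in range(num_units)}
    by_cost = sorted(task_costs.items(), key=lambda item: item[1], reverse=True)
    for task, cost in by_cost:
        load, unit = heapq.heappop(heap)             # least-loaded unit
        assignment[unit].append(task)
        heapq.heappush(heap, (load + cost, unit))
    loads = {u: sum(task_costs[t] for t in tasks) for u, tasks in assignment.items()}
    return assignment, loads

# Hypothetical per-task costs in arbitrary time units.
costs = {"conv1": 8, "conv2": 7, "pool": 3, "fc": 2}
assignment, loads = assign_tasks(costs, num_units=2)
print(loads)  # {0: 10, 1: 10}
```

Both units end up with the same total load in this example, so neither sits idle while the other finishes.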

AI-optimized hardware efficiently supports parallel processing for AI workloads by employing multiple processor units, SIMD/SIMT architectures, parallel memory access, and specialized AI accelerators such as TPUs. It allows for faster execution of AI algorithms, improved performance on large-scale computations, and the ability to process and analyze data in parallel, accelerating the progress and capabilities of AI systems.

Are There Any Challenges Associated with Integrating AI-Optimized Hardware Into Existing Systems?

Yes, integrating AI-optimized hardware into existing systems presents challenges. These include compatibility, scalability, the software and development environment, data and model migration, cost and return on investment, and system integration and testing.

Compatibility must be considered when integrating AI-optimized hardware into existing systems. The hardware often has specific interface requirements, software dependencies, or driver needs that must be met for seamless integration with the existing infrastructure. Compatibility issues arise when the hardware interacts with other components or software applications that have not been modified or optimized to use it effectively.

Scalability issues can also arise when integrating AI-optimized hardware into existing systems. The equipment may require additional power, cooling, or space. Meeting these requirements can necessitate complex and expensive modifications to the existing system architecture and infrastructure.

AI-optimized hardware frequently relies on specific software frameworks, libraries, and programming models. Existing software applications must be modified, or new software components must be developed, to integrate the hardware. This work requires knowledge of the hardware’s associated software tools and development environment, which may call for additional training or resources.

Existing data and models must be migrated to the new hardware environment as part of integrating AI-optimized hardware. The procedure involves ensuring data compatibility, optimizing data storage, and adapting existing models to exploit the hardware’s capabilities. Migrating massive datasets and models is time-consuming and requires careful planning and testing to ensure a seamless transition.

Integrating AI-optimized hardware incurs substantial expenses, including hardware acquisition, infrastructure adjustments, software development, and staff training. It is crucial to evaluate the return on investment (ROI) to justify the integration effort. Organizations must weigh the costs of integrating the hardware into their existing systems against the prospective benefits.
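A basic ROI check can be sketched as follows, using the standard definition of ROI as net gain divided by total cost. The cost categories mirror the ones listed above; every figure is a hypothetical placeholder, and the function name `integration_roi` is an assumption for illustration.

```python
def integration_roi(costs, annual_benefit, years=1):
    """Return ROI as a fraction: (total benefit - total cost) / total cost."""
    total_cost = sum(costs.values())
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    return (annual_benefit * years - total_cost) / total_cost

# Hypothetical figures, in a single currency.
costs = {
    "hardware": 100_000,
    "infrastructure": 20_000,
    "software": 30_000,
    "training": 10_000,
}
roi = integration_roi(costs, annual_benefit=100_000, years=2)
print(f"ROI over two years: {roi:.0%}")
```

A positive value means the estimated benefits exceed the integration costs over the chosen period; a negative value means they do not.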

Incorporating AI-optimized hardware into existing systems requires extensive system integration and testing. Debugging, troubleshooting, and refining the integration process are required to ensure compatibility, performance optimization, and stability in the integrated environment. Validating the functionality and efficacy of the integrated system requires rigorous testing.

These obstacles necessitate meticulous planning, technical proficiency, and collaboration between hardware providers, software developers, and system administrators. It is necessary to assess compatibility, scalability, software requirements, data migration, cost implications, and integration testing to ensure the successful integration of AI-optimized hardware into existing systems.

What Are the Future Trends and Advancements in AI-Optimized Hardware?

Future developments and trends in AI-optimized hardware are shaped by seven factors: specialized AI accelerators, quantum computing, neuromorphic computing, in-memory computing, heterogeneous integration, energy efficiency and sustainability, and co-design of the hardware and software.

The development of specialized AI accelerators continues to advance, catering to specific AI workloads. Companies are investing in the design and production of dedicated chips and accelerators that offer higher computational power and energy efficiency for AI tasks. These specialized accelerators, such as Neural Processing Units (NPUs) or domain-specific architectures, allow faster and more efficient AI processing.

Quantum computing holds promise for AI optimization and for solving complex AI problems. Quantum processors exploit quantum principles such as superposition and entanglement, which could speed up certain AI algorithms significantly. Although still in its early stages, quantum computing has the potential to revolutionize AI-optimized hardware by allowing faster and more powerful calculations for AI workloads.

Neuromorphic computing aims to mimic the structure and functionality of the human brain, leading to highly efficient and brain-inspired AI hardware. Neuromorphic chips and architectures are designed to process data in a more biologically inspired manner, allowing energy-efficient and parallel processing for AI tasks. These advancements have the potential to revolutionize AI hardware, particularly in cognitive computing and pattern recognition.

In-memory computing leverages memory-centric architectures to perform computations directly within memory, minimizing data movement and improving processing speed. AI-optimized hardware incorporating in-memory computing techniques significantly enhances the efficiency of AI algorithms by reducing latency and optimizing memory access. The approach has the potential to revolutionize the memory hierarchy in AI hardware.

The integration of different types of processing units, such as CPUs, GPUs, and AI accelerators, continues to evolve. Heterogeneous integration aims to leverage the strengths of each processing unit for specific AI workloads, optimizing performance and energy efficiency. Advanced packaging and interconnect technologies allow tighter integration of diverse processing units on a single chip or within a single package, enhancing the capabilities of AI-optimized hardware.

Future advancements in AI-optimized hardware are increasingly focused on energy efficiency and sustainability. Given the growing demand for AI processing and the need to address environmental concerns, hardware designs prioritize energy-efficient architectures, power management techniques, and optimization algorithms. The aim is to achieve higher performance per watt and reduce the carbon footprint of AI systems.

Hardware and software co-design plays a crucial role in optimizing AI performance. Close collaboration between hardware designers and software developers results in better integration, code optimization, and algorithm-hardware co-optimization. The synergy ensures that AI algorithms are developed with hardware considerations in mind, unlocking the full potential of AI-optimized hardware.

These future trends and advancements in AI-optimized hardware hold the potential to drive breakthroughs in AI capabilities, enabling faster and more efficient AI processing, improved energy efficiency, and the ability to tackle increasingly complex AI workloads.

Can AI-Optimized Hardware Assist in Natural Language Processing Tasks?

Yes, AI-optimized hardware assists in Natural Language Processing (NLP) tasks. It does so in four ways: computational power, parallel processing, memory optimization, and precision optimization.

NLP tasks involve complex computations, such as those behind language modeling, sequence generation, and deep neural network architectures. AI-optimized hardware, such as Graphics Processing Units (GPUs) or Tensor Processing Units (TPUs), provides high computational power to handle these tasks efficiently. These hardware solutions are designed to excel at parallel processing and matrix operations, which are fundamental to many NLP algorithms. NLP tasks are executed faster and more efficiently by using the computational power of AI-optimized hardware.

NLP tasks that use large datasets or complex models benefit from parallel processing. AI-optimized hardware is specifically designed to handle parallel computations effectively. GPUs and TPUs, for example, have multiple cores or processing units that perform computations simultaneously, enabling faster execution of NLP algorithms. Parallel processing capabilities help accelerate the training and inference processes of NLP models, allowing for quicker results and improved performance.

NLP tasks require efficient memory utilization, as they involve processing and analyzing large amounts of text data. AI-optimized hardware incorporates memory architectures and optimization techniques that enhance memory access and data movement. These improve the efficiency of NLP algorithms by reducing memory latency and optimizing memory usage. Efficient memory management allows faster retrieval and processing of data, leading to improved performance in NLP tasks.

AI-optimized hardware allows for precision optimization in NLP tasks. Many NLP algorithms operate effectively with reduced-precision representations, such as low-precision or mixed-precision arithmetic, which require fewer bits to represent data and computations. AI-optimized hardware supports these reduced-precision formats, which improve the computational speed and efficiency of NLP algorithms.
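As a rough illustration of reduced precision, the sketch below applies symmetric 8-bit integer quantization to a handful of floating-point values and then restores them. This is one common scheme chosen here as an assumption; it is not tied to any particular chip or framework, and the sample weights are hypothetical.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]

weights = [0.5, -1.27, 0.03, 1.0]  # hypothetical model weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, scale, max_error)
```

Each value now fits in 8 bits instead of 32 or 64, at the cost of a rounding error of at most half the scale per value.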

NLP tasks benefit from higher performance, improved efficiency, and faster processing times by exploiting the computational capacity, parallel processing capabilities, memory optimization, and precision optimizations offered by AI-optimized hardware. AI-optimized hardware plays a crucial role in advancing the capabilities of NLP systems, allowing more accurate language understanding, better language generation, and enhanced natural language interaction.

What Are the Implications of AI-Optimized Hardware in The Field of Artificial Intelligence?

AI-optimized hardware has six key implications for AI: increased computational power, enhanced efficiency, accelerated training and inference, scalability and flexibility, advancement in AI algorithms, and innovation and discovery.

AI-optimized hardware, such as specialized processors, GPUs, and TPUs, provides increased computational power specifically designed for AI workloads. It allows for faster and more efficient execution of AI algorithms, enabling the processing of large datasets and complex models. The increased computational power provided by AI-optimized hardware expands the possibilities of AI applications, enabling advanced analytics, deep learning, and real-time decision-making.

AI-optimized hardware is designed with efficiency in mind, providing higher performance per watt. It delivers quicker and more energy-efficient calculations by exploiting parallel processing, memory optimization, and specialized designs. Efficiency is crucial for deploying AI systems in resource-constrained environments, improving energy sustainability, and reducing operational costs associated with AI computations.

AI-optimized hardware dramatically accelerates AI model training and inference. Training deep learning models on AI-optimized hardware, such as GPUs or TPUs, drastically reduces training time. The same hardware provides quicker and more efficient inference, allowing AI models to analyze data and generate predictions in real time or near real time. The acceleration improves the scalability and usability of AI systems, allowing them to be used in a variety of fields.

AI-optimized hardware allows AI systems to scale and adapt. It allows the effective processing of large-scale AI workloads, meeting the growing needs of data-intensive AI applications. AI-optimized hardware is designed for parallel processing and can be expanded easily by adding more processor units or specialized accelerators. Scalability facilitates the creation of AI systems capable of processing vast volumes of data, supporting concurrent users, and adapting to changing AI needs.

AI-optimized hardware drives advances in AI algorithms and models. Its enhanced processing power and efficiency allow the exploration of more sophisticated AI systems, advanced deep learning methods, and optimization algorithms. Researchers and practitioners can create and implement increasingly advanced AI models, resulting in advancements in computer vision, natural language processing, recommendation systems, and autonomous systems.

AI-optimized hardware opens new possibilities for innovation and discovery in the field of AI. It allows researchers, scientists, and developers to push the boundaries of AI by exploring novel algorithms, developing cutting-edge applications, and tackling complex problems. The availability of high-performance AI hardware fosters breakthroughs in AI research, leading to advancements in fields such as healthcare, finance, manufacturing, and scientific discovery.

AI-optimized hardware plays a critical role in advancing the capabilities of AI systems, enabling faster computations, improved efficiency, scalability, and breakthroughs in AI research and applications. The implications of AI-optimized hardware extend across various industries, driving innovation and enabling the realization of the full potential of Artificial Intelligence.

How Is Artificial Intelligence Used in AI Robotics?

AI is used in robotics in seven main ways: intelligent decision-making, perception and sensing, motion planning and control, machine learning for robot learning, human-robot interaction, autonomous navigation and mapping, and collaborative robotics.

AI allows robots to make intelligent decisions based on real-time sensor data and predefined rules or learned models. Reinforcement learning allows robots to learn through trial and error and optimize their actions based on rewards and penalties. It lets robots adapt and improve their decision-making capabilities over time.
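Learning from rewards and penalties can be sketched with tabular Q-learning, one standard reinforcement learning algorithm. The toy environment below is an assumption made for illustration: a robot in a five-cell corridor starts in cell 0, receives a reward of +1 for reaching cell 4, and pays a small penalty for every other step. The hyperparameters and names are likewise illustrative.

```python
import random

N_STATES = 5                  # corridor cells 0..4; cell 4 is the goal
ACTIONS = ("left", "right")

def step(state, action):
    """Move one cell; reward +1 at the goal, otherwise a small penalty."""
    nxt = max(0, state - 1) if action == "left" else state + 1
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else -0.01), done

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values by trial and error with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = [{a: 0.0 for a in ACTIONS} for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(200):  # cap on steps per episode
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)                      # explore
            else:
                action = max(ACTIONS, key=lambda a: q[state][a])  # exploit
            nxt, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[nxt].values())
            # Q-learning update: move the estimate toward reward + discounted future value.
            q[state][action] += alpha * (reward + gamma * best_next - q[state][action])
            state = nxt
            if done:
                break
    return q

q_table = train()
policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(N_STATES - 1)]
print(policy)
```

After training, the greedy policy read from the table tells the robot which direction to move from each cell.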

AI techniques for perception and sensing, such as computer vision and sensor fusion, empower robots to perceive and understand their environment. Computer vision algorithms analyze visual data from cameras and allow robots to recognize objects, detect obstacles, and navigate their surroundings. Sensor fusion combines data from multiple sensors, such as cameras, lidars, and radars, allowing robots to create a comprehensive and accurate representation of their environment.
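A minimal form of sensor fusion is inverse-variance weighting, in which each sensor's reading is weighted by how precise that sensor is. The sketch below assumes independent measurements of the same distance with known variances; the sensor readings and variances are hypothetical, and practical systems typically use richer methods such as Kalman filters.

```python
def fuse(measurements):
    """Fuse (value, variance) pairs with inverse-variance weights.

    Returns the fused estimate and its variance.
    """
    weights = [1.0 / variance for _, variance in measurements]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, measurements)) / total
    return fused, 1.0 / total

# Hypothetical distance readings in meters: (value, variance).
readings = [
    (10.0, 0.04),  # lidar: more precise
    (10.5, 0.16),  # camera-based estimate: less precise
]
distance, variance = fuse(readings)
print(distance, variance)
```

The fused estimate lies closer to the more precise sensor, and its variance is lower than that of either sensor alone.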